Multi-cloud offers companies the promise of increased choice, higher availability, and reduced risk for their platform and infrastructure needs. However, their production ops teams must grapple with the increased complexity and pain of managing across multiple clouds. Shoreline’s platform hides this complexity, eliminates the pain, and makes multi-cloud operations much easier on the team!

Even single-cloud operations are hard. Resources are distributed across multiple clusters, regions, and accounts. There isn’t a unified way to query and act on resources across the system. You have to navigate the credentials of each Kubernetes cluster. Now layer on the different credentialing systems of multiple cloud providers, and it’s clear why you need a plan for managing all of those credentials securely. Shoreline provides a much simpler access experience.

One Resource Inventory System across all clouds

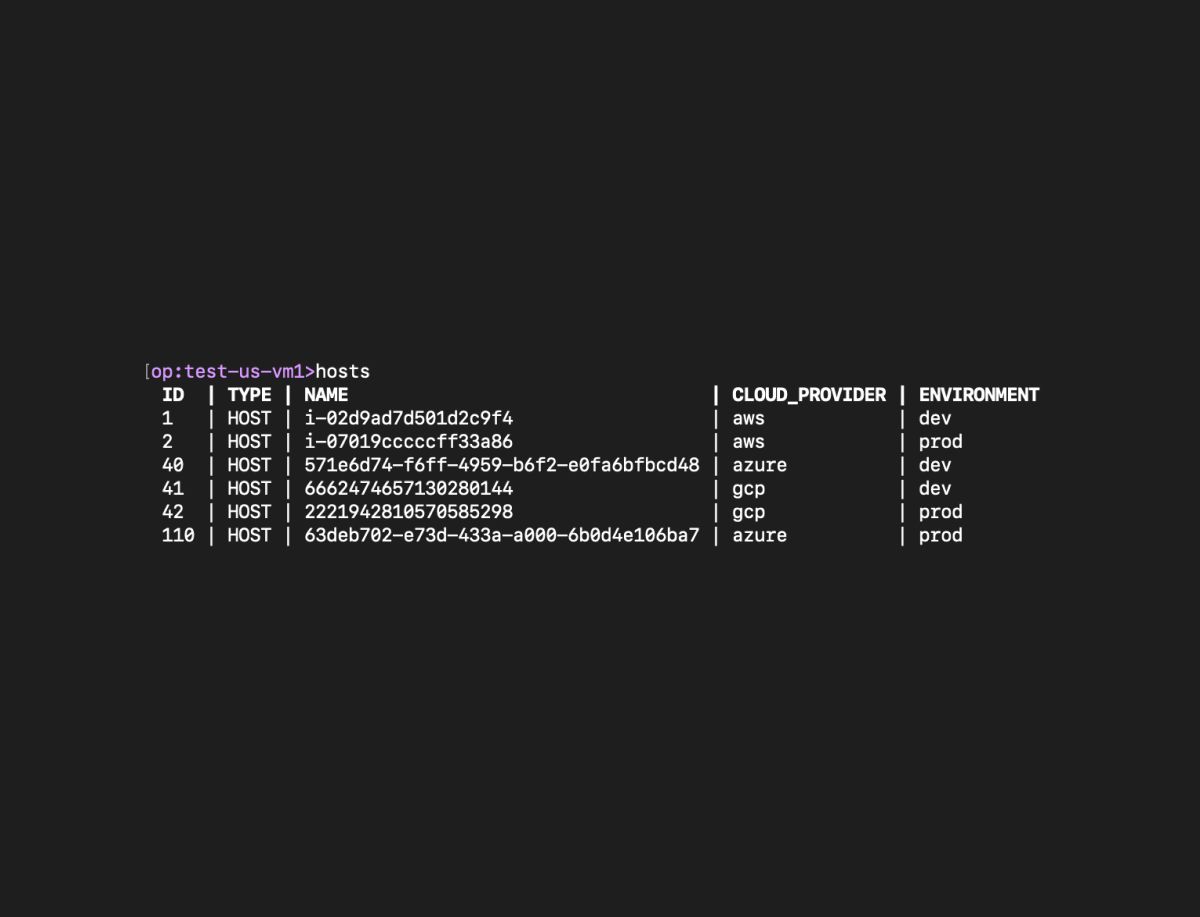

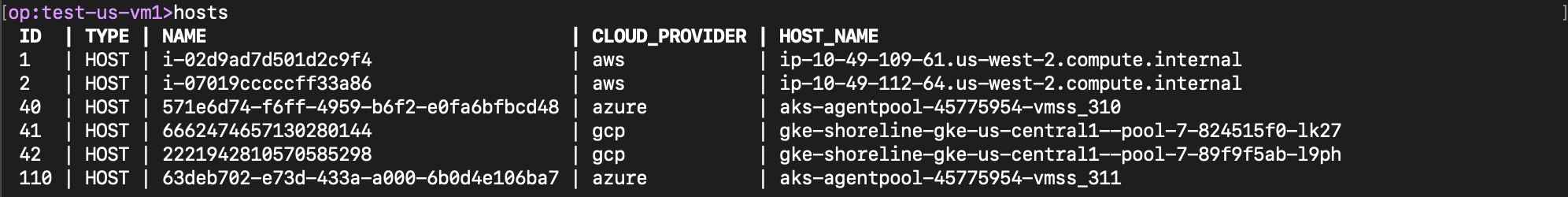

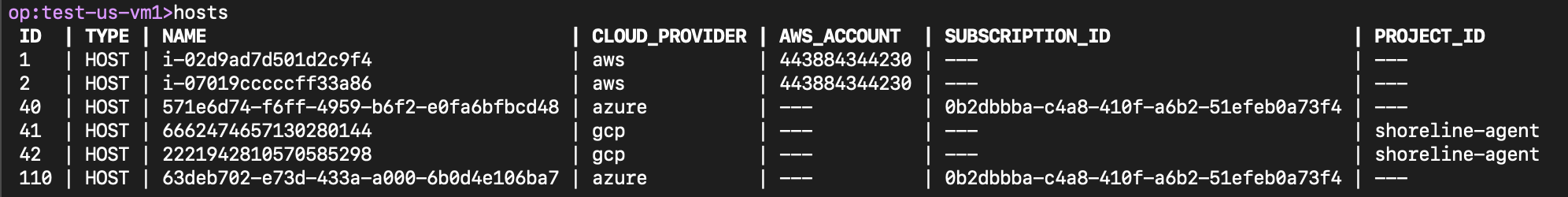

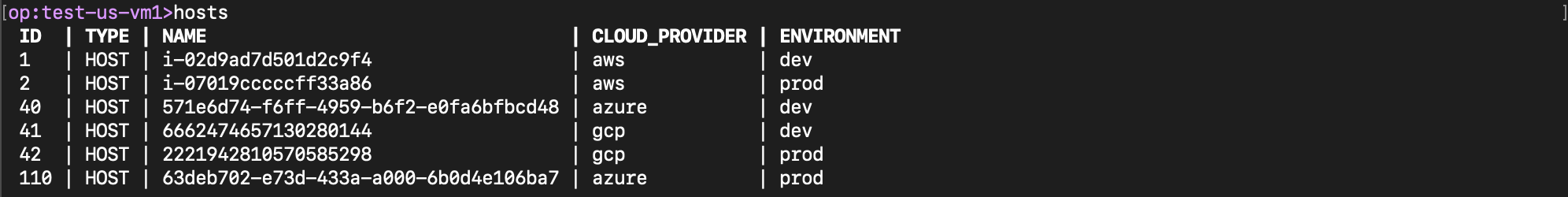

In the Shoreline CLI or web UI, you can see all the resources you are managing across all clouds. Resources such as hosts, pods, and containers are discovered automatically. The tags available on these resources in the cloud provider’s inventory are also picked up. You can find resources of interest by searching and filtering on these tags with Shoreline Op.

For example, here we have a common tag called “host_name” which is available for each host across AWS, GCP, and Azure.

Certain tags are unique to each cloud provider. For example, the AWS account ID is specific to AWS, the Azure subscription ID to Azure, and the GCP project ID to GCP. Note that the Shoreline Op CLI can readily be customized to show the tags of interest.

Each cloud provider has its own way of adding custom tags to resources. An operator might configure their CI/CD pipelines to tag the resources with the environment tag of “dev”, “qa”, or “prod”. These custom tags are also picked up by Shoreline from each cloud.
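To make the tag model concrete, here is a minimal Python sketch of a unified inventory that mixes common tags, provider-specific tags, and custom tags. It is an illustration only, not Shoreline’s implementation and not Op syntax. The `Resource` class, the `find` helper, the provider-specific tag names, and every tag value are hypothetical; only `host_name` and the “dev”/“qa”/“prod” environment values come from the text above.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    """One discovered resource and its tags (hypothetical model)."""
    name: str
    kind: str    # "host", "pod", or "container"
    cloud: str   # "aws", "gcp", or "azure"
    tags: dict = field(default_factory=dict)


# Made-up inventory. Every host carries the common "host_name" tag and a
# custom "environment" tag; each also carries one provider-specific tag.
INVENTORY = [
    Resource("host-a", "host", "aws",
             {"host_name": "host-a", "environment": "prod",
              "aws_account_id": "example-account"}),
    Resource("host-b", "host", "gcp",
             {"host_name": "host-b", "environment": "dev",
              "gcp_project_id": "example-project"}),
    Resource("host-c", "host", "azure",
             {"host_name": "host-c", "environment": "prod",
              "azure_subscription_id": "example-subscription"}),
]


def find(inventory, **wanted):
    """Return resources whose tags match every key/value pair in `wanted`."""
    return [r for r in inventory
            if all(r.tags.get(key) == value for key, value in wanted.items())]


# One filter works across all three clouds because the tag is shared.
prod_hosts = find(INVENTORY, environment="prod")
print([(r.name, r.cloud) for r in prod_hosts])
```

The point of the sketch is that a shared tag lets one filter span providers, while provider-specific tags remain available when you need to narrow to a single account, project, or subscription.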

One way to run interactive commands across clouds - It’s like SQL for operations!

Through the same interface, you can run interactive commands spanning hosts, clusters, and clouds. You don’t have to connect to each Kubernetes cluster separately using a separate kubeconfig context, and then exec into each pod separately. You don’t have to generate the kubeconfig for each cloud in a different way. You also don’t have to SSH to each host separately using different SSH keys. And if you are using the console, you don’t have to log in with different credentials for different accounts, or constantly toggle between different GCP projects, Azure subscriptions, or AWS regions.

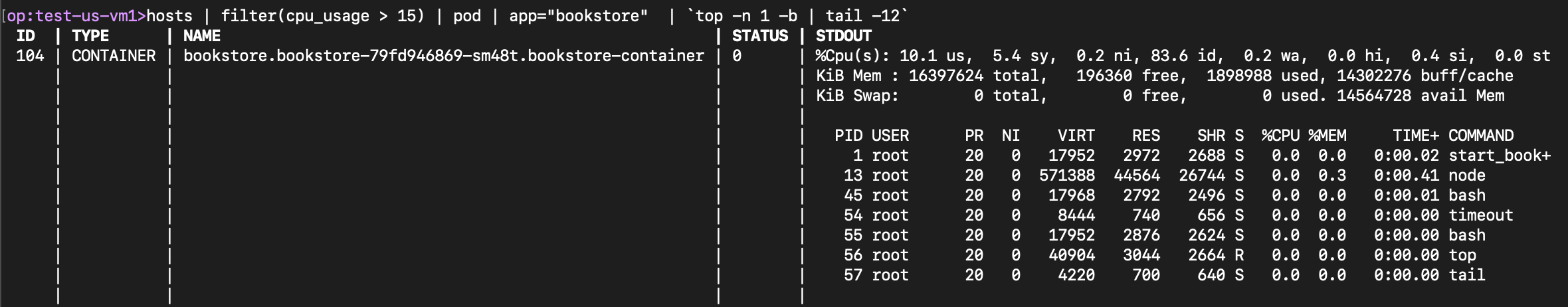

Below, I executed a single Op statement in Shoreline that runs the Linux top command on all my Bookstore app pods, across all Kubernetes clusters, regions, and clouds, that are running on hosts with more than 15% CPU usage.

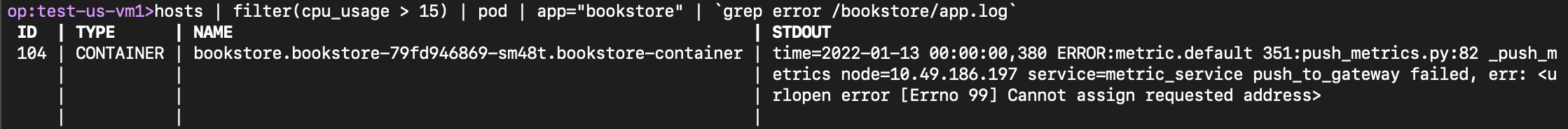
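Conceptually, the query described above is a filter followed by a fan-out: select the Bookstore pods whose host exceeds 15% CPU, then run one command on each. The Python sketch below illustrates that shape under stated assumptions. It is not Op syntax and not how Shoreline executes commands; `Host`, `Pod`, `select_pods`, `run_everywhere`, and the stub executor are all hypothetical names with made-up data.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    cloud: str
    cpu_usage: float  # percent


@dataclass
class Pod:
    name: str
    app: str
    host: Host


def select_pods(pods, app, min_host_cpu):
    """Pods of `app` whose host CPU usage exceeds `min_host_cpu` percent."""
    return [p for p in pods if p.app == app and p.host.cpu_usage > min_host_cpu]


def run_everywhere(pods, command, executor):
    """Call `executor(pod, command)` for each pod; collect results by pod name."""
    return {p.name: executor(p, command) for p in pods}


def fake_executor(pod, command):
    """Stand-in for real remote execution; it only describes what would run."""
    return f"{command} on {pod.name} ({pod.host.cloud})"


# Made-up fleet spanning three clouds.
host_a = Host("host-a", "aws", 42.0)
host_b = Host("host-b", "gcp", 7.5)
host_c = Host("host-c", "azure", 18.0)
PODS = [
    Pod("bookstore-1", "bookstore", host_a),
    Pod("bookstore-2", "bookstore", host_b),
    Pod("bookstore-3", "bookstore", host_c),
    Pod("checkout-1", "checkout", host_a),
]

busy_bookstore_pods = select_pods(PODS, "bookstore", 15.0)
results = run_everywhere(busy_bookstore_pods, "top", fake_executor)
for pod_name, output in results.items():
    print(pod_name, "->", output)
```

The selection logic never mentions a cloud provider, which is the sense in which the interface behaves like a query language over resources.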

I also wanted to see the errors in the app logs on the Bookstore containers in real time. It was just another effortless one-liner.

Unified way to automate ops across clouds

Operations are by nature dynamic. Software and infrastructure evolve, and new operational issues keep coming up. It is critical to keep runbooks updated and keep up the pace of automation so that operations remain sustainable and scalable. During an on-call incident, the duration of the downtime and the resulting customer impact are determined by how quickly an operator can gather insightful data across resources and clouds, debug the issue, and carefully repair it by executing the appropriate cloud provider specific APIs.

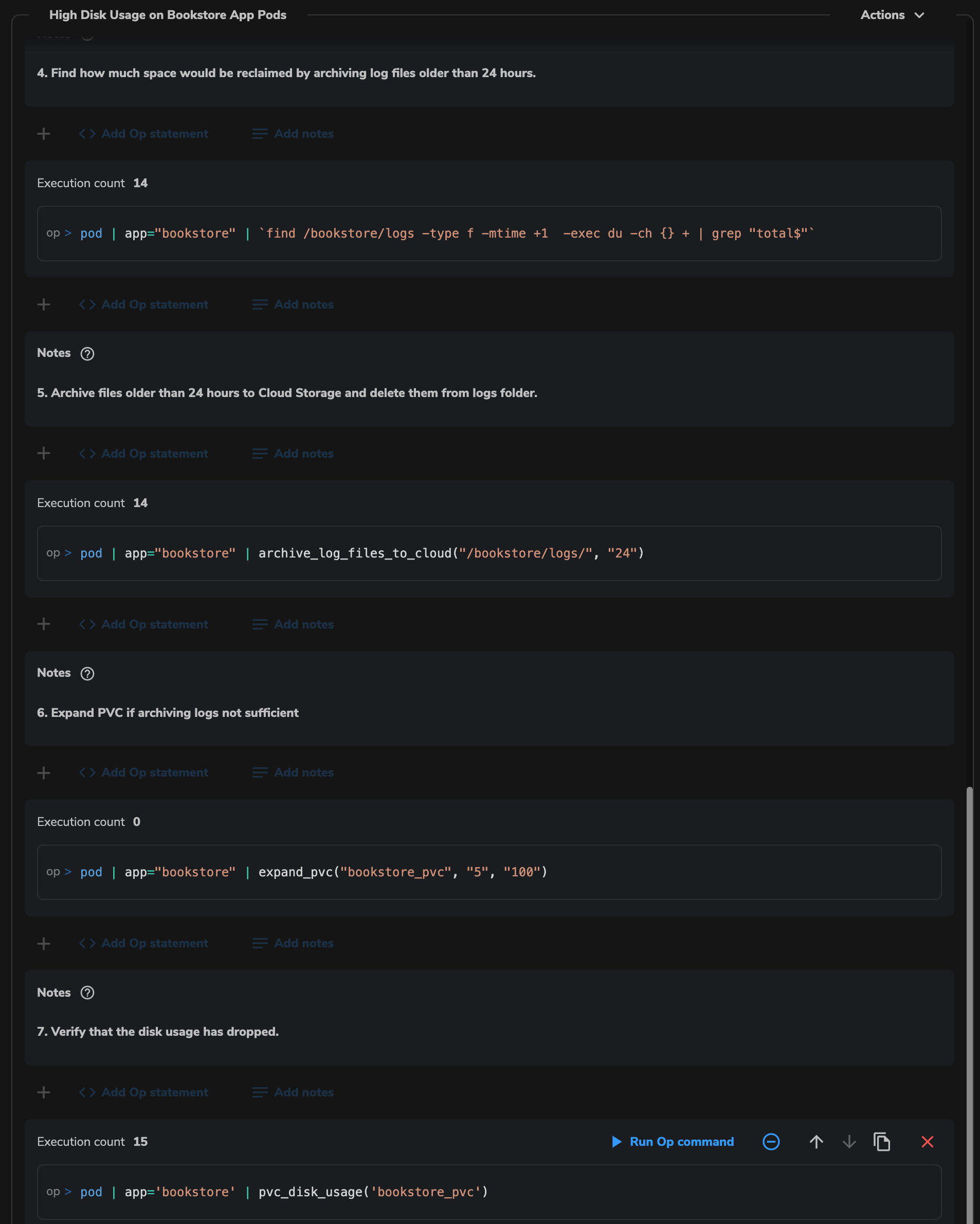

You don’t need to have separate runbooks for each cloud. Below I have an example of a Shoreline Notebook for responding to a high disk usage alarm on Bookstore app pods running on multiple clouds. I can see which resources are impacted. I can archive log files older than a day to cloud provider specific storage and delete them from local disk. If needed and if supported by the cloud provider (without any downtime for the host), I can expand the cloud provider specific elastic disk volume being used by the host. I can do all of these with single clicks.
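As a rough illustration of the “archive log files older than a day” step, the Python sketch below only selects the candidate files by modification time. It is not taken from the Shoreline Notebook; the function name, the `*.log` pattern, and the demo files are assumptions, and the upload to cloud storage and the local delete are deliberately left out because they are provider specific.

```python
import os
import tempfile
import time
from pathlib import Path

ONE_DAY_SECONDS = 24 * 60 * 60


def logs_older_than(directory, max_age_seconds=ONE_DAY_SECONDS, now=None):
    """Return *.log files in `directory` last modified more than `max_age_seconds` ago."""
    now = time.time() if now is None else now
    cutoff = now - max_age_seconds
    return sorted(p for p in Path(directory).glob("*.log")
                  if p.stat().st_mtime < cutoff)


# Demo in a throwaway directory so no real files are touched.
with tempfile.TemporaryDirectory() as tmp:
    old, new = Path(tmp, "old.log"), Path(tmp, "new.log")
    old.write_text("stale\n")
    new.write_text("fresh\n")
    two_days_ago = time.time() - 2 * ONE_DAY_SECONDS
    os.utime(old, (two_days_ago, two_days_ago))  # backdate the first file
    candidates = [p.name for p in logs_older_than(tmp)]
    print(candidates)
```

Separating selection from the archive and delete steps keeps the cloud-agnostic part testable on its own.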

Once I know that a recurring issue always needs the same automated remediation, I can create a Shoreline bot that executes the action on the impacted resources automatically whenever the alarm fires, so that I don’t have to wake up in the middle of the night. I don’t need to create a separate alarm, action, and bot for each cloud to make this happen.
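The alarm, action, and bot relationship can be sketched in a few lines of Python. This is a conceptual model only, not Shoreline’s API or Op syntax: `PodStatus`, `high_disk_alarm`, `archive_logs_action`, `Bot`, the 80% threshold, and the archive target labels are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PodStatus:
    name: str
    cloud: str
    disk_used_pct: float


def high_disk_alarm(pod, threshold=80.0):
    """Alarm condition: disk usage above an assumed 80% threshold."""
    return pod.disk_used_pct > threshold


# Placeholder labels standing in for provider-specific storage.
ARCHIVE_TARGETS = {"aws": "aws-archive", "gcp": "gcp-archive", "azure": "azure-archive"}


def archive_logs_action(pod):
    """One action; the provider-specific detail is hidden behind a lookup."""
    target = ARCHIVE_TARGETS[pod.cloud]
    return f"archived old logs from {pod.name} to {target}"


@dataclass
class Bot:
    """Ties one alarm to one action, regardless of cloud."""
    alarm: Callable
    action: Callable

    def run(self, pods):
        # Apply the action only to the pods for which the alarm fires.
        return [self.action(p) for p in pods if self.alarm(p)]


PODS = [
    PodStatus("bookstore-1", "aws", 91.0),
    PodStatus("bookstore-2", "gcp", 40.0),
    PodStatus("bookstore-3", "azure", 85.5),
]

bot = Bot(alarm=high_disk_alarm, action=archive_logs_action)
for line in bot.run(PODS):
    print(line)
```

Only the lookup table knows about individual providers, so a single alarm, action, and bot covers every cloud in the fleet.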

Takeaway

Multi-cloud or even multi-cluster/region/account operations can be daunting, but they don’t have to be. You can have the luxury of a single pane of glass to access your resources, run interactive commands across them, and automate operations across clouds. You don’t need every SRE on your team to be an expert in every cloud.